Welcome back. Anthropic just dropped something big for developers (again). The AI lab shipped a new Claude Code feature, putting a team of agents to work on your pull requests. We're seeing a clear shift from coding copilots to autonomous agents that can actually write, review, and fix code on their own.

Also: OpenAI engineer drops the ultimate Codex guide, the production checklist for vibe-coded apps, and what Google's AI Director says engineers should stop worrying about.

Today’s Insights

Powerful new updates and hacks for devs

Nobody agrees on what "AI-native" actually means

How to fork Claude Code mid-session

Trending social posts, top repos, and more

TODAY IN PROGRAMMING

Claude Code now reviews your PRs with a team of agents: The AI lab just shipped Code Review for Claude Code, a multi-agent system that analyzes every pull request, verifies bugs to minimize false positives, and prioritizes findings by severity. In internal testing, the share of PRs receiving substantive review comments jumped from 16% to 54%. It's currently in research preview for Team and Enterprise users, with each review costing $15 to $25 on average.

JetBrains drops a “council of coding agents”: The IDE maker just unveiled Air in public preview, an agentic development environment that lets developers delegate tasks to Claude, Codex, and Gemini simultaneously. Each agent receives precise codebase context and delivers changes that you can review against your entire project. By default, agents run locally with built-in Docker and Git worktree sandboxing.

Anthropic takes DOD to court over supply-chain designation: The AI lab filed two federal lawsuits on Monday to prevent the Department of Defense from labeling it a national security risk. The legal battle began after the Pentagon penalized Anthropic for keeping safety guardrails on autonomous weapons. This dispute has already cost the company over $100 million in lost revenue as partners flee to competitors, while another $180 million in potential enterprise deals remain at risk.

PRESENTED BY LINKUP

Your model is only as good as the web data it retrieves. Yet most teams never properly evaluate their search provider.

Linkup's web search evaluation framework gives you a repeatable method to measure result quality, compare APIs, and ship reliable AI products.

INSIGHT

Nobody agrees on what "AI-native" actually means

Source: The Code, Superhuman.

A word that means everything. "AI-native" is the phrase every engineering team is rallying around, but when Vinay Perneti, VP of Engineering at Augment Code, talked to his team about it, half thought it meant using an agent in the IDE and the other half thought it meant humans setting direction while agents handle execution. Those are two completely different jobs, and most teams are running both at once.

The core of the confusion. That split is really a question of identity. Mo, a developer whose video "I was a 10x engineer. Now I'm useless" hit 4.9M views, puts it more bluntly than most: he used AI to ship a working product in a day and felt nothing toward it. At Perneti's offsite, a breakout group named the same thing: the shift from "proud builder" to "proud coordinator."

The math doesn't add up. What makes the identity shift harder is that the productivity payoff isn't arriving cleanly. A METR study found experienced devs who believed AI made them 20% faster were actually 19% slower. That gap between perception and reality is feeding the anxiety already building underneath.

Silent resistance. This anxiety shows up as passive disengagement, tools that get installed but never used, and rollouts that quietly stall. Perneti's takeaway is that teams stalling on AI adoption usually aren't lacking the right tech. They're lacking a conversation that nobody wanted to have.

IN THE KNOW

What’s trending on socials and headlines

Codex Unlocked: An OpenAI engineer just posted a 10-step guide to actually using Codex, and it goes way past the basics most devs stop at.

Four and Done: Your terminal is holding you back. Apparently, you only need these 4 upgrades to fix it entirely.

Shelf Check: A senior AI engineer just shared the 16 repos she actually runs in production. How many are already in your stack?

Security Debt: Vibe-coded apps tend to fail in the same three places. This checklist is what separates a hobby project from one you'd put in front of real users.

Pocket Claw: One dev built a tool that puts Claude Code on your iPhone so you can ship from anywhere.

The Missing Test: A Google DeepMind engineer argues that your AI agent skills need evals for the same reasons your code needs tests. Most devs are skipping this entirely.

Skill Floor: A dev’s viral confession about feeling useless in the AI era prompted Google Cloud AI's Director to share what skills actually still matter for engineers.

AI CODING HACK

How to fork Claude Code mid-session

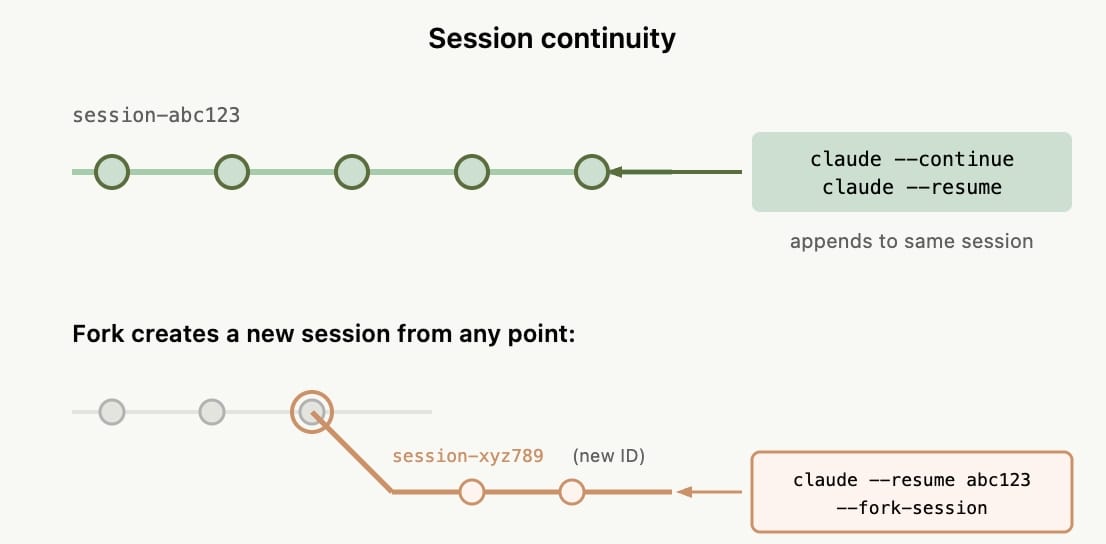

When you're in the middle of a Claude Code session and want to try a different approach, you usually risk losing your context or cluttering the thread with failed attempts. An underrated Claude Code feature solves both problems.

Simply type “/fork” and Claude Code will branch from that exact point, keeping your original thread completely intact.

The same thing works from the CLI when resuming a session:

    claude --continue --fork-session

This creates a copy of the session from where you left off, allowing both threads to run independently. If the new approach doesn't pan out, you can simply close the fork and return to the original session without losing any progress.

TOP & TRENDING RESOURCES

Top Tutorial

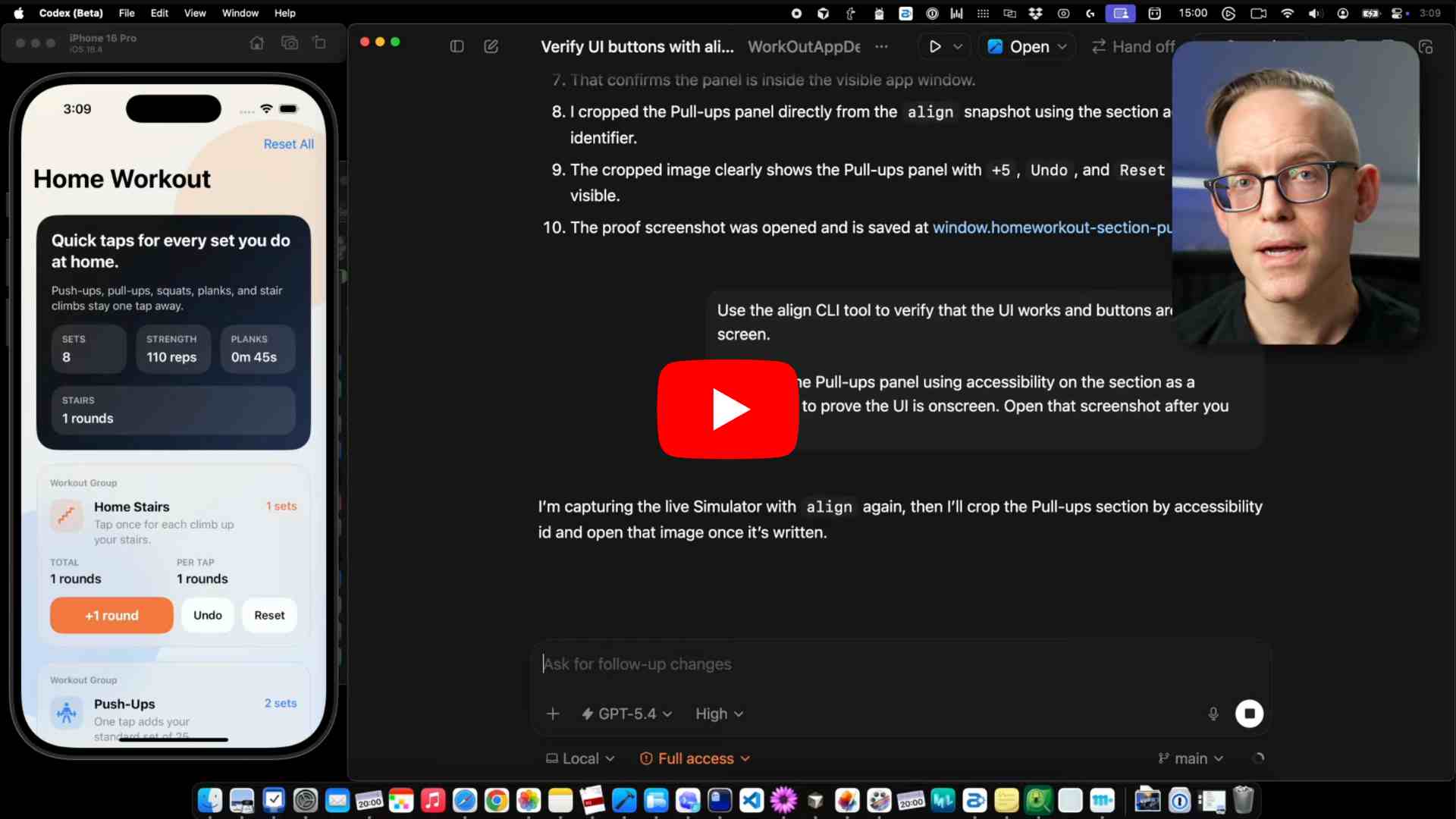

How to build apps with Codex: This tutorial shows developers how to build iOS and macOS apps using Codex and GPT-5.4. You'll learn to set up efficient workflows using tools like Xcode and Ghostty, collaborate with AI agents, troubleshoot issues, and use continuous learning files to improve AI performance over time.

Top Repo

Everything Claude Code: Built by an Anthropic hackathon winner, this repo packs ten months of Claude Code optimizations into a single deployable system. It features pre-configured agents, skills, MCP configs, and security scanners designed to optimize the performance of an AI agent harness.

Trending Paper

Anthropic analyzes Firefox repository with Claude Opus 4.6: Finding hidden security flaws in complex software typically requires massive human effort. However, Claude Opus 4.6 rapidly discovered 22 new vulnerabilities in Firefox, suggesting that AI is currently better at detecting bugs than at exploiting them.

Grow customers & revenue: Join companies like Google, IBM, and Datadog. Showcase your product to our 200K+ engineers and 100K+ followers on socials. Get in touch.

What did you think of today's newsletter?

You can also reply directly to this email if you have suggestions, feedback, or questions.

Until next time — The Code team