Welcome back. AI agents keep getting smarter, but running multiple tasks at once still feels clunky. Cursor just dropped an update that changes how you manage them.

Also: How a senior engineer built a coding harness in 60 lines, a DeepMind engineer's 8 tips for better agent skills, and what Google expects from Product Managers in 2026.

P.S. We’ve grown to over 230K CTOs, engineering leaders, and developers, and we’re just getting started. We’d love to know how we can bring even more value to your career. Drop us a note in the poll at the end of this issue.

Today’s Insights

Powerful new updates and hacks for devs

Why long-running AI agents keep failing

How to fix Claude Code's unstable behavior

Trending social posts, top repos, and more

TODAY IN PROGRAMMING

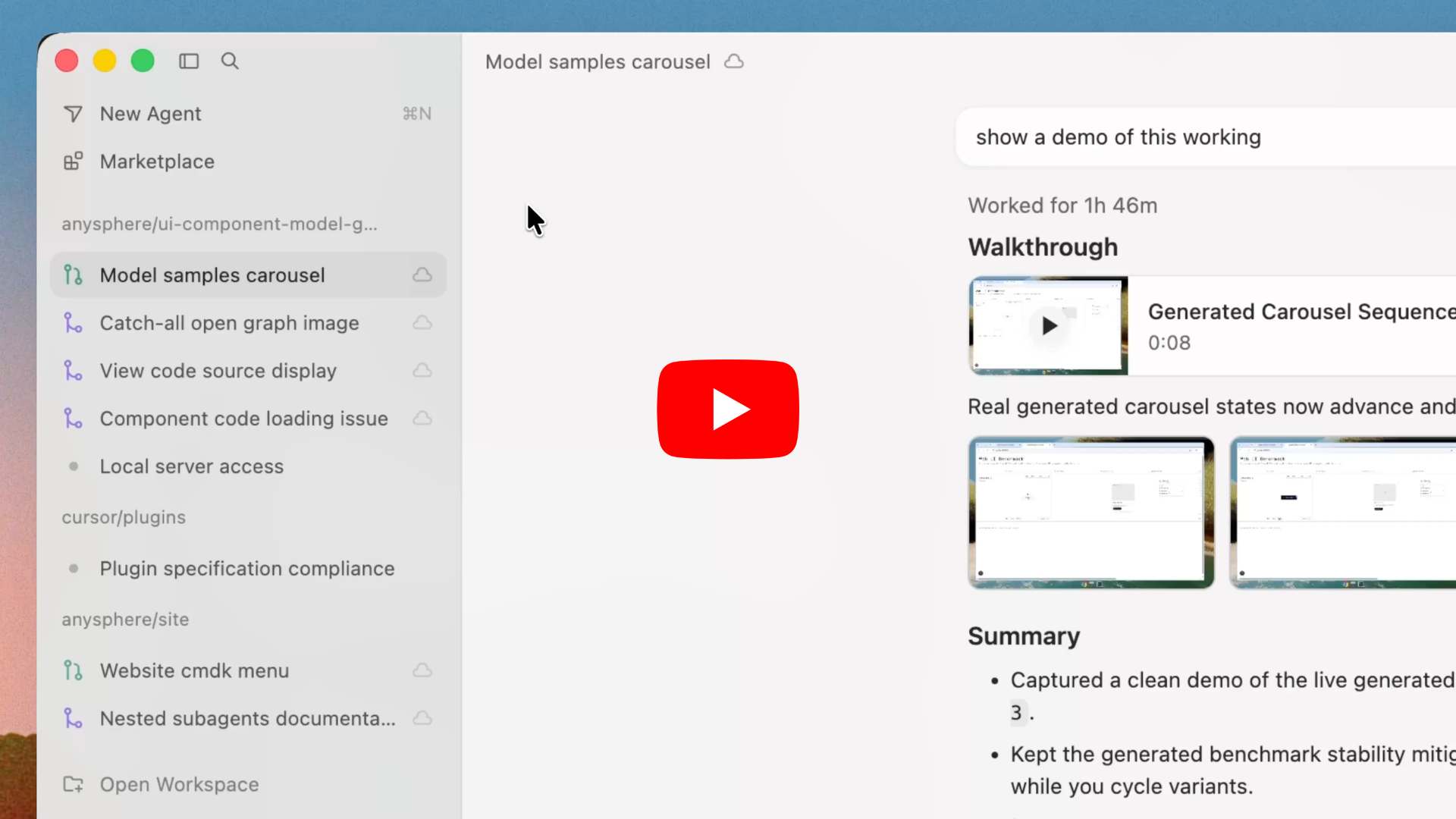

Cursor now lets you manage a fleet of coding agents at once: The AI coding startup just shipped Cursor 3.1, adding a tiled Agents Window for running multiple AI agents in draggable panes. This lets you compare outputs side by side without tab switching and saves your layout for next time. The update also brings batch speech-to-text, branch selection for cloud agents, and improved file search filters. Watch all updates.

Vercel open-sources a platform for cloud coding agents: The frontend cloud platform just unveiled Open Agents, a template for deploying coding agents that run indefinitely in the cloud. Built with their AI SDK and Sandbox tools, it allows teams to run hundreds of agents at once with zero timeouts or setup hassle. Try it here.

Meta is reportedly working on an AI clone of its CEO: The social media giant is developing an AI version of Mark Zuckerberg, training it on his specific mannerisms, tone, and public statements, according to the Financial Times. The AI is also being briefed on his strategic vision so it can step in and advise Meta employees when he's not around.

PRESENTED BY WISPR

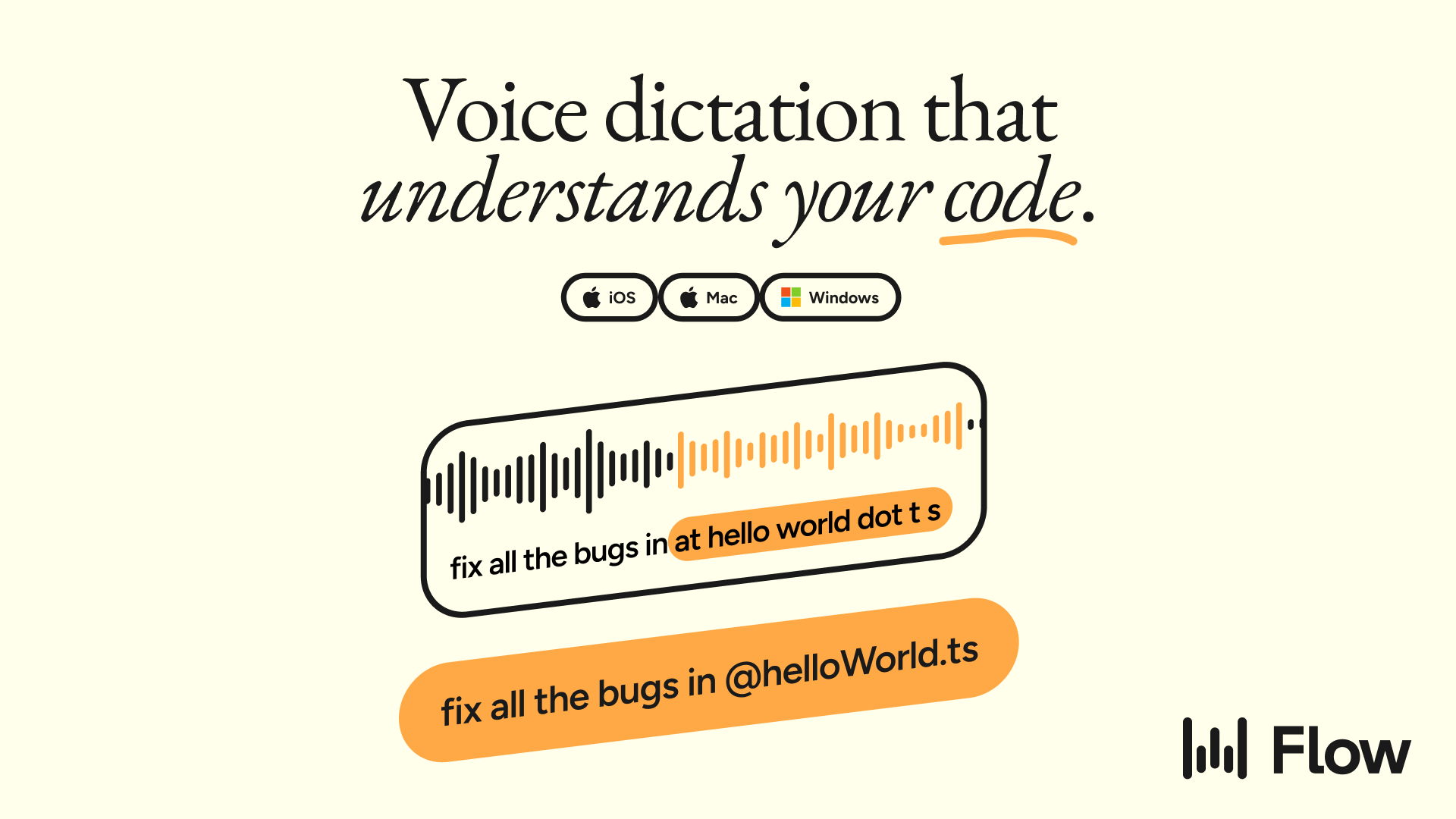

You spend hours on things that aren't code. PR descriptions. Slack threads explaining why you made that architecture call. Linear tickets with enough context so your teammate doesn't ping you at 11pm. Docs you keep pushing to next sprint.

Wispr Flow handles all of it. Speak naturally, and it outputs clean text anywhere you type. Syntax-aware, so your variable names and file paths stay intact.

It won't write your code. But it'll clear out everything blocking you from writing it. Works across Mac, Windows, iPhone, and Android.

Teams at Vercel, Clay, and Rivian already use it daily.

INSIGHT

Why long-running AI agents keep failing

Source: The Code, Superhuman

The 45-minute cliff. AI agents start strong but lose steam halfway through, declaring projects "finished" at 60% completion. Anthropic engineer Prithvi Rajasekaran identified two main culprits: models lose focus as context windows fill, and they can't honestly critique their own work.

Agents are terrible at grading themselves. They routinely praise mediocre or even broken code as high-quality. This is especially bad for subjective tasks like frontend design, where automated tests don't exist. You can't easily tune a generator to be self-critical, so a different approach is needed.

The fix. Rajasekaran split the work across three agents: a planner, a generator, and a skeptical evaluator. The evaluator uses Playwright (a browser automation tool) to test the app like a real user. The trade-off is cost and time: a solo agent built a broken game for $9 in 20 minutes, while this multi-agent harness produced a working game for $200 over 6 hours.
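To make the planner, generator, and evaluator split concrete, here is a minimal Python sketch of the control loop. It is not Rajasekaran's actual harness: every name in it (run_harness, Verdict, HarnessResult, the demo_* functions) is hypothetical, and the three roles are plain stub functions standing in for model calls and a Playwright-driven check.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Verdict:
    """What the evaluator reports back (hypothetical shape)."""
    passed: bool
    feedback: str


@dataclass
class HarnessResult:
    artifact: str
    rounds: int
    passed: bool


def run_harness(
    goal: str,
    plan: Callable[[str], List[str]],
    generate: Callable[[List[str], str, str], str],
    evaluate: Callable[[str], Verdict],
    max_rounds: int = 5,
) -> HarnessResult:
    """Plan once, then alternate generate -> evaluate until the evaluator passes."""
    steps = plan(goal)
    artifact, feedback = "", ""
    for round_number in range(1, max_rounds + 1):
        # The generator never grades itself; it only reacts to outside feedback.
        artifact = generate(steps, artifact, feedback)
        verdict = evaluate(artifact)
        if verdict.passed:
            return HarnessResult(artifact, round_number, True)
        feedback = verdict.feedback
    return HarnessResult(artifact, max_rounds, False)


# Stub roles for illustration only; real ones would call a model and a browser.
def demo_plan(goal: str) -> List[str]:
    return [f"build {goal}", "add scoring"]


def demo_generate(steps: List[str], previous: str, feedback: str) -> str:
    # The first draft stops early, like an agent declaring itself "finished".
    done = steps if feedback else steps[:1]
    return "; ".join(done)


def demo_evaluate(artifact: str) -> Verdict:
    if "add scoring" in artifact:
        return Verdict(True, "")
    return Verdict(False, "scoring is missing")


if __name__ == "__main__":
    print(run_harness("game", demo_plan, demo_generate, demo_evaluate))
```

The design point is that the pass/fail decision lives outside the generator, so an early "finished" claim only counts once a separate check agrees.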

The catch is maintenance. Agent harnesses are built on assumptions about model limitations that quickly become obsolete. When upgrading from Opus 4.5 to 4.6, Anthropic found they could strip away entire structures that the newer model no longer needed. The real engineering challenge is figuring out which components to remove as models continue to improve.

IN THE KNOW

What’s trending on socials and headlines

Meme of the day.

Total Recall: Your AI agent forgets everything between sessions. This guide builds an agent memory that actually learns.

Under the Hood: A senior engineer built an AI coding harness from scratch in 60 lines of Python, demystifying the "magic" behind coding agents.

The Philosopher: Google DeepMind's newest hire isn't an engineer, a researcher, or a product lead. It's a Cambridge philosopher (1.1M views).

Before You Ship: Vibe-coded apps keep shipping with exposed API keys. This pre-launch checklist covers every blind spot AI won't catch for you.

Holy War: A leaked memo from OpenAI's CRO calls Claude "a religion" internally and lays out a five-point plan to win the enterprise AI war.

Hard Truth: An ex-Microsoft engineering lead says tech hiring isn't dead, but most developers are making three mistakes that kill their applications before a human even reads them.

New Rules: If you're interviewing for a PM role at Google, the technical round is gone. What replaced it is a whole different test.

TOP & TRENDING RESOURCES

Top Tutorial

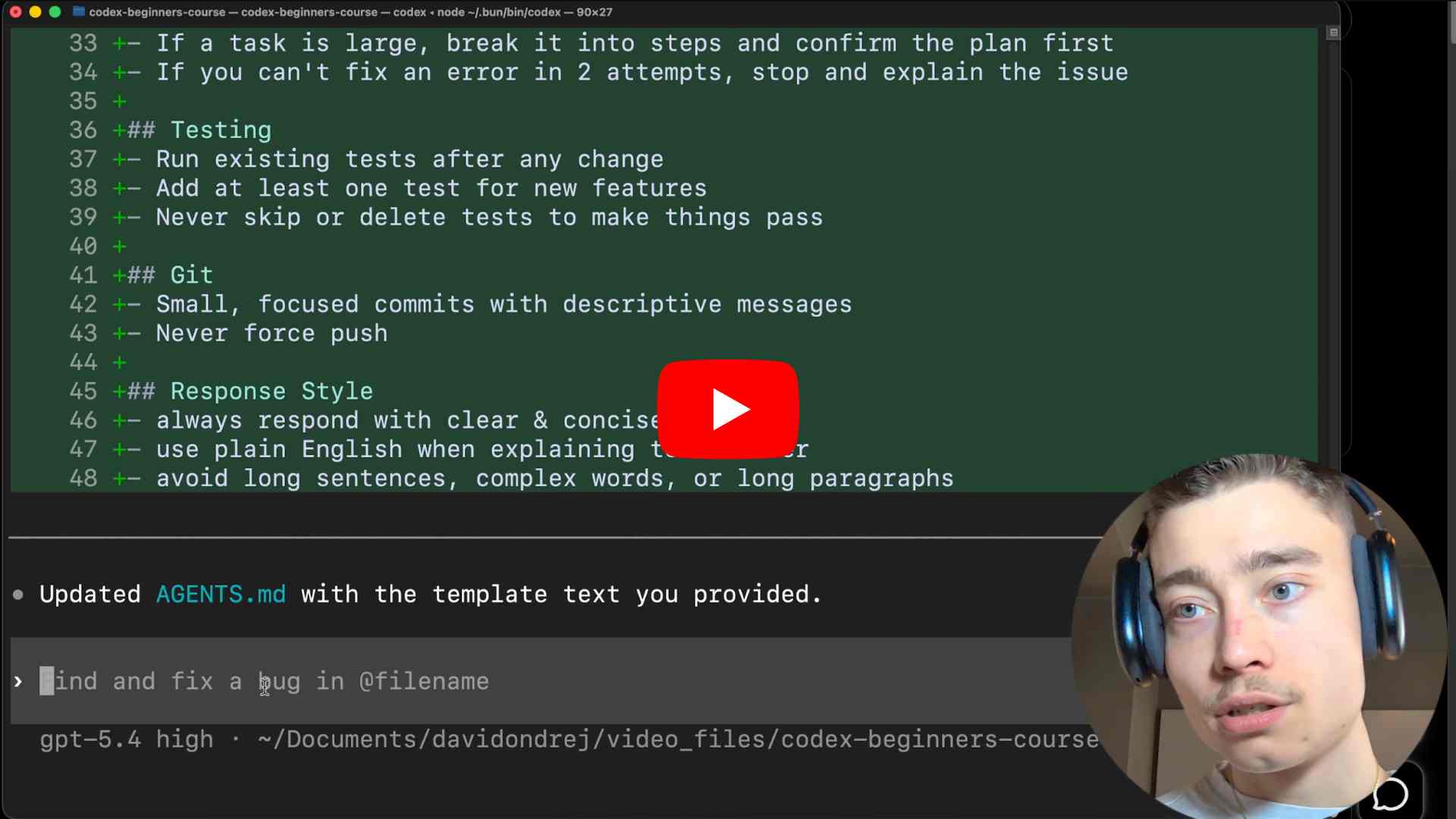

How to build and deploy an app with Codex: You’ll learn how to build and launch a complete web app using Codex. The tutorial also covers how to configure Codex plugins, generate code with plain English prompts, and fix bugs quickly.

Top Repo

MarkItDown: This repo converts any messy file into clean, structured Markdown that is ready for your AI agents. It provides a simple way to feed PDFs, slides, documents, audio, and more directly into LLMs without having to struggle with parsers or lose critical context.

Trending Paper

Scaling coding agents via atomic skills: AI coding agents struggle with new tasks when trained only on big-picture problems. By training them instead on basic "atomic skills" like editing, they significantly improve their ability to generalize and solve completely unseen coding challenges.

AI CODING HACK

How to fix Claude Code's unstable behavior

Claude Code users have noticed a dip in quality recently. Bloated context, settings overrides, and stale auto-memory are burning through tokens quickly.

Kun Chen, a former L8 engineer at Meta and Microsoft, shared a fix to lock these things down. Just add this config into “~/.claude/settings.json”:

{
  "effortLevel": "high",
  "env": {
    "CLAUDE_CODE_DISABLE_1M_CONTEXT": "1",
    "CLAUDE_CODE_DISABLE_ADAPTIVE_THINKING": "1",
    "CLAUDE_CODE_DISABLE_AUTO_MEMORY": "1",
    "CLAUDE_CODE_SUBAGENT_MODEL": "sonnet"
  }
}

These flags optimize your setup by keeping it lean, locking your effort level, and giving you manual control over CLAUDE.md. Switching the subagent to Sonnet also boosts reliability. Just save your changes and restart Claude Code.
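If you already have a settings file, pasting the block over it would wipe your existing keys. Below is a small Python sketch that merges the config in instead. The key names are copied from the config above as-is and have not been checked against the Claude Code docs; the helper names (merge_settings, apply_settings) are hypothetical.

```python
import json
from pathlib import Path

# Keys copied verbatim from the newsletter's config (unverified assumption).
RECOMMENDED = {
    "effortLevel": "high",
    "env": {
        "CLAUDE_CODE_DISABLE_1M_CONTEXT": "1",
        "CLAUDE_CODE_DISABLE_ADAPTIVE_THINKING": "1",
        "CLAUDE_CODE_DISABLE_AUTO_MEMORY": "1",
        "CLAUDE_CODE_SUBAGENT_MODEL": "sonnet",
    },
}


def merge_settings(existing: dict, updates: dict) -> dict:
    """Return a copy of `existing` with `updates` applied, merging nested dicts."""
    merged = dict(existing)
    for key, value in updates.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_settings(merged[key], value)
        else:
            merged[key] = value
    return merged


def apply_settings(path: Path, updates: dict) -> dict:
    """Merge `updates` into the JSON file at `path`, creating it if needed."""
    text = path.read_text().strip() if path.exists() else ""
    existing = json.loads(text) if text else {}
    merged = merge_settings(existing, updates)
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(merged, indent=2) + "\n")
    return merged


# Example call (back up the file first):
# apply_settings(Path.home() / ".claude" / "settings.json", RECOMMENDED)
```

Existing keys that the config does not mention are left untouched; keys it does mention are overwritten.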

Grow customers & revenue: Join companies like Google, IBM, and Datadog. Showcase your product to our 230K+ engineers and 150K+ followers on socials. Get in touch.

What did you think of today's newsletter?

You can also reply directly to this email if you have suggestions, feedback, or questions.

Until next time — The Code team