Welcome back. Ever talked to a laggy voice AI agent? It’s painful. Google just took a massive leap toward changing that with Gemini’s latest upgrade. Speed isn’t even the best part: you can now have much longer conversations thanks to its doubled memory.

Also: How to build agent evals, design CLIs for coding agents, and run deep research from your terminal.

Today’s Insights

Powerful new updates and hacks for devs

What in the world is comprehension debt?

How to make Claude Code read Reddit

Trending social posts, top repos, and more

TODAY IN PROGRAMMING

Google's Gemini 3.1 Flash Live raises the bar for voice agents: The search giant just shipped its most powerful real-time audio model to date, built specifically for voice-first agents that can manage complex tasks at scale. It boasts a 90.8% score on ComplexFuncBench Audio for multi-step function calling and leads Scale AI's Audio MultiChallenge for its ability to follow instructions even with real-world interruptions.

Stripe builds a CLI so agents can set up your infrastructure: The payments giant just launched Stripe Projects, a command-line tool that lets you set up hosting, databases, auth, and analytics with just a few commands. It handles everything from credentials and billing to plan upgrades right from the terminal, so you can stop jumping back and forth to the dashboard and focus on shipping. They've already teamed up with early partners like Vercel, Supabase, and Clerk. Access is available by request.

Chroma open-sources a 20B search agent that rivals frontier models: This AI data infrastructure company just dropped Context-1, a 20B-parameter agentic search model under the Apache 2.0 license. Built on OpenAI's gpt-oss-20B, it handles complex queries by breaking them down into sub-queries, retrieving data iteratively, and automatically pruning irrelevant info to fit a 32k-token limit. It rivals heavyweights like GPT-5.4 and Opus 4.6 on public benchmarks while being faster and cheaper.
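To make the "prune to fit a 32k-token limit" idea concrete, here is a minimal shell sketch of budget-based context pruning. It is not Chroma's implementation: Context-1 is a trained model that decides for itself what to drop. This sketch instead assumes each retrieved chunk already carries a relevance score and a token count. The tab-separated input format and the `prune_to_budget` name are hypothetical. It simply keeps the highest-scoring chunks that still fit.

```bash
#!/usr/bin/env bash
# Hypothetical sketch, not Chroma's Context-1 code: greedily keep the most
# relevant retrieved chunks until a token budget is used up.
#
# Input on stdin, one chunk per line, tab-separated (assumed format):
#   <relevance score> <TAB> <token count> <TAB> <chunk id>
# Output: ids of the chunks that fit, most relevant first.
prune_to_budget() {
  local budget="$1" tab
  tab="$(printf '\t')"
  # Sort by relevance score, highest first (C locale so "0.9" parses).
  LC_ALL=C sort -t "$tab" -k1,1nr |
    # Admit a chunk only if it still fits; smaller, less relevant chunks
    # may fill space that a larger one could not.
    awk -F '\t' -v budget="$budget" '
      used + $2 <= budget { used += $2; print $3 }
    '
}

# Example: a 32k-token budget over four retrieved chunks.
printf '0.9\t20000\tdoc-a\n0.2\t5000\tdoc-b\n0.7\t15000\tdoc-c\n0.5\t10000\tdoc-d\n' |
  prune_to_budget 32000
# prints doc-a, then doc-d
```

A greedy pass like this can drop a large, relevant chunk in favor of smaller ones (doc-c above). A learned pruner can, in principle, weigh that tradeoff against the query instead.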

INSIGHT

What in the world is comprehension debt?

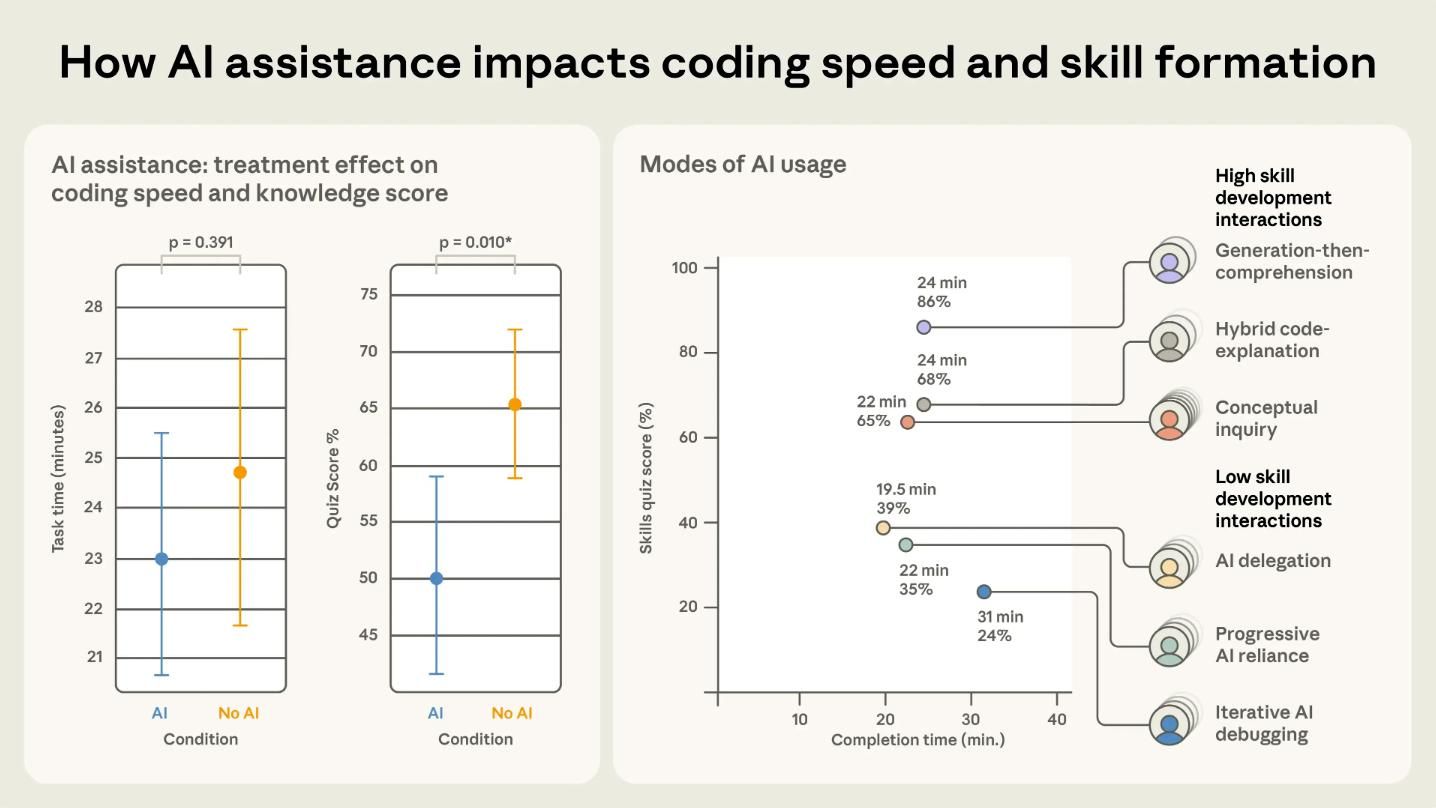

Faster than you can follow. Pull requests are merging faster than ever, codebases are expanding, and every productivity metric looks fantastic. But behind the scenes, many engineers are hitting a strange wall. They can no longer explain exactly why their own systems work the way they do. Addy Osmani, Director at Google Cloud AI, recently gave this problem a name: comprehension debt.

Clean code, shallow understanding. Unlike technical debt, which announces itself through slow builds and messy dependencies, comprehension debt is far more subtle. The code looks clean, the tests are green, and the reckoning only arrives at the worst possible moment — usually when something breaks and nobody can trace why.

The broken feedback loop. Code reviews used to be the primary engine for knowledge sharing. By reading a teammate's PR, you learned how the system evolved. But AI now generates code faster than any human can genuinely review, effectively breaking that loop. An Anthropic study of 52 engineers confirmed the impact: those using AI assistants scored significantly lower on comprehension tests, with the sharpest declines occurring in debugging performance.

Comprehension is the job now. AI handles the heavy lifting of writing code. The real engineering now happens in understanding what was written, why it was built that way, and whether those architectural choices were the right ones. If your team is feeling the weight of this shift, ex-Vercel engineer Matt Pocock recently shared a simple rule worth adopting.

IN THE KNOW

What’s trending on socials and headlines


DIY Vaccine: An AI consultant used ChatGPT, Gemini, and Grok to design a custom mRNA cancer vaccine for his dog. Sam Altman met him this week and has a big vision for where this goes next.

Broken Evals: Most teams throw hundreds of tests at their agents and call it progress. LangChain just dropped the eval framework they actually use instead.

CLI Researcher: A dev built a deep research agent that runs entirely from your terminal using Browserbase.

Agent-Proof CLIs: Most CLIs aren't built for AI agents. A former Microsoft PM wrote 7 principles for designing ones that work seamlessly with Claude Code and Codex.

Vibe-Coded Science: Google's Project Genie team needed a way to visualize world models, so they vibe-coded one in AI Studio.

GPU School: An ML research engineer just open-sourced 38 podcast episodes on GPU engineering fundamentals.

AI CODING HACK

How to make Claude Code read Reddit

Claude Code's WebFetch often hits a wall with sites like Reddit, returning a 403 error that can stall your research. To fix this, YK, an ex-Google engineer, shared a clever workaround using Claude Code's skills system. By creating a custom skill file, you can teach Claude to pivot to the Gemini CLI whenever it encounters a blocked domain.

Just install the Gemini CLI and authenticate:

```bash
npm install -g @google/gemini-cli
gemini # run once to auth with your Google account
```

Create the skill directory and download the file:

```bash
mkdir -p ~/.claude/skills/reddit-fetch
curl -o ~/.claude/skills/reddit-fetch/SKILL.md \
  https://raw.githubusercontent.com/ykdojo/claude-code-tips/main/skills/reddit-fetch/SKILL.md
```

The next time you ask Claude Code to pull data from Reddit, it'll automatically trigger the skill to manage the tmux session, Gemini query, and output capture. Since skills load on demand, you only use tokens when Claude Code actually needs to access Reddit.
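The skill itself lives in the downloaded SKILL.md and drives Gemini through a tmux session. The fallback idea behind it can be sketched as a small shell function: try a plain fetch first, and hand the URL to Gemini only when the site answers 403. The `fetch_with_fallback` name and the prompt wording are hypothetical. The sketch also assumes the Gemini CLI's `-p` flag for running a single non-interactive prompt, so check `gemini --help` on your install.

```bash
#!/usr/bin/env bash
# Hypothetical sketch of the fallback idea; this is NOT the contents of
# SKILL.md, which drives an interactive Gemini session through tmux.
# Assumes `gemini -p "<prompt>"` runs one prompt non-interactively.
fetch_with_fallback() {
  local url="$1" body status
  body="$(mktemp)"
  # Try a plain fetch first; save the page and capture only the status code.
  status="$(curl -sL -o "$body" -w '%{http_code}' "$url")"
  case "$status" in
    200)
      cat "$body" ;;
    403)
      # Blocked domain: let the Gemini CLI read the page instead.
      gemini -p "Fetch $url and summarize its main content." ;;
    *)
      echo "fetch failed with HTTP $status" >&2
      rm -f "$body"
      return 1 ;;
  esac
  rm -f "$body"
}
```

Usage: `fetch_with_fallback https://www.reddit.com/r/programming/`. Branching on the status code keeps the fallback cheap, because Gemini is only invoked when the direct fetch is refused.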

TOP & TRENDING RESOURCES

Top Tutorial

How Stripe’s team built their coding agent: This video breaks down how Stripe’s engineering team uses AI agents, or "minions," to automate their workflows. You'll see how teams can trigger these agents directly from Slack to write and deploy code at the same time. It also shows how these agents use machine-to-machine payments to independently purchase services and interact with third-party APIs.

Top Repo

Awesome Cursor Rules: This repo gives teams plug-and-play rules for dozens of stacks, helping you get sharper suggestions, write more consistent code, and ship faster across every project.

Trending Paper

Improving Composer through real-time RL (by Cursor): Earlier this week, Cursor dropped a technical report on how they trained Composer 2. Now, they've shared more research into their training process for new checkpoints. With real-time RL, they’re able to ship updated versions of the model every five hours.

Grow customers & revenue: Join companies like Google, IBM, and Datadog. Showcase your product to our 200K+ engineers and 100K+ followers on socials. Get in touch.


You can also reply directly to this email if you have suggestions, feedback, or questions.

Until next time — The Code team