Welcome back. Chinese AI labs are having a massive year so far — MiniMax just released a model that reportedly trained itself. Their new M2.7 model completed over 100 loops of autonomous self-improvement without any human intervention. The best part? It's closing in on frontier AI models on coding benchmarks.

Also: An Anthropic engineer reveals how the company designs Skills, OpenAI's million-dollar challenge, and building a real feature with Claude Code.

Today’s Insights

Powerful new updates and hacks for devs

How to make sense of harness engineering

How to automate PRs in Cursor (hack)

Trending social posts, top repos, and more

TODAY IN PROGRAMMING

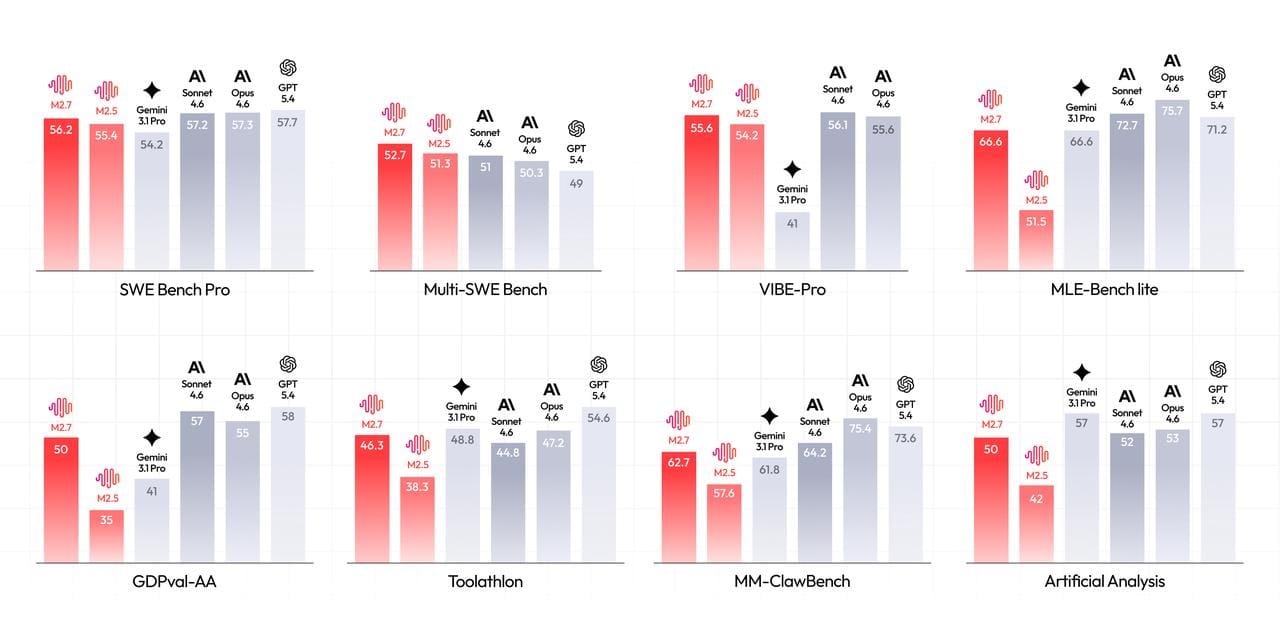

MiniMax rivals top coding models with self-evolving AI: The Chinese AI lab just released M2.7, its first model to actively participate in its own training process. It scored 56.22% on SWE-Pro, matching GPT-5.3-Codex, and hit 55.6% on VIBE-Pro for end-to-end project delivery across web, Android, and iOS. The model supports native multi-agent collaboration and maintains 97% skill adherence across more than 40 complex tools. MiniMax reports that it has cut production incident recovery time to under three minutes in some cases. Try it now.

OpenAI's new models bring GPT-5.4 power to fast coding workflows: The ChatGPT maker just shipped two smaller models designed to bring GPT-5.4's power to latency-sensitive workloads. GPT-5.4 mini runs over twice as fast as GPT-5 mini and nearly matches the full model's performance on SWE-Bench Pro, making it ideal for coding assistants, subagents, and computer-use workflows. GPT-5.4 nano is an API-only version designed for lightweight subtasks like classification and data extraction. Watch it in action.

Anthropic turns Claude Cowork into an always-on desktop assistant: Anthropic just launched Dispatch, a new research preview within Claude Cowork that maintains a persistent conversation on your desktop. This allows you to assign tasks to Claude from your phone and return to completed work later. For security, Claude runs code in a local sandbox and requires explicit approval before taking action. The feature is currently rolling out to Max subscribers, with Pro access expected soon.

INSIGHT

How to make sense of harness engineering

Source: The Code, Superhuman

One rule changed everything. In February, OpenAI shared how a team of just three engineers used Codex agents to build a million-line production app in only five months. The project involved roughly 1,500 PRs without a single line of hand-written code. Instead, engineers focused on designing environments and feedback loops, while the agents handled the rest. That write-up popularized the term "harness engineering," which is now a cornerstone of AI engineering.

So what is it? A harness is the complete ecosystem built around a model. It includes constraints, linters, documentation, and feedback loops that turn raw intelligence into a reliable tool. The big takeaway? When an agent struggles, you don't just keep tweaking the prompt. You look at what's missing in its environment and fix it there.
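To make the idea concrete, here is a minimal sketch of a harness feedback loop. Everything in it is hypothetical: `run_with_harness`, `toy_agent`, and the two checks are stand-ins we made up for illustration, not anyone's actual implementation. The point is only that failures from the environment get fed back to the agent, rather than someone hand-tweaking the prompt.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

# A check inspects a candidate change and returns an error message, or None if it passes.
Check = Callable[[str], Optional[str]]
# An agent takes a task plus feedback from the previous attempt and returns new output.
Agent = Callable[[str, List[str]], str]


@dataclass
class HarnessResult:
    output: str
    attempts: int
    passed: bool


def run_with_harness(agent: Agent, task: str, checks: List[Check],
                     max_attempts: int = 3) -> HarnessResult:
    """Run the agent, apply every check, and feed failures back until all pass."""
    feedback: List[str] = []
    output = ""
    for attempt in range(1, max_attempts + 1):
        output = agent(task, feedback)
        feedback = []
        for check in checks:
            message = check(output)
            if message is not None:
                feedback.append(message)
        if not feedback:
            return HarnessResult(output, attempt, True)
    return HarnessResult(output, max_attempts, False)


def must_compile(code: str) -> Optional[str]:
    """Constraint: the candidate must at least be valid Python."""
    try:
        compile(code, "<candidate>", "exec")
    except SyntaxError as exc:
        return f"syntax error: {exc.msg}"
    return None


def requires_docstring(code: str) -> Optional[str]:
    """Toy linter rule: the candidate must contain a docstring."""
    return None if '"""' in code else "missing docstring"


def toy_agent(task: str, feedback: List[str]) -> str:
    """Stand-in for a model call: it only adds a docstring once told to."""
    if any("docstring" in message for message in feedback):
        return 'def add(a, b):\n    """Add two numbers."""\n    return a + b\n'
    return "def add(a, b):\n    return a + b\n"


result = run_with_harness(toy_agent, "write add()", [must_compile, requires_docstring])
print(result.passed, result.attempts)
```

In this toy run the first attempt fails the docstring rule, the message goes back to the agent, and the second attempt passes. Making the agent more reliable here means adding or tightening a check, not rewording the task.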

The proof keeps stacking up. LangChain’s coding agent climbed from the bottom of the pack to the top five on Terminal Bench 2.0 simply by redesigning the harness while using the same underlying model. Similarly, Anthropic kept agents coherent over long sessions using structured files and JSON lists. These results suggest that environment design is often more critical than the specific model being used.

Where the real moat lives. The value lies in the feedback loops and architectural guardrails that make agents reliable at scale. If you want to see harness engineering in practice, the Claude Code team at Anthropic recently shared how they built their system. Their breakdown of tool design and action spaces is a must-read.

IN THE KNOW

What’s trending on socials and headlines

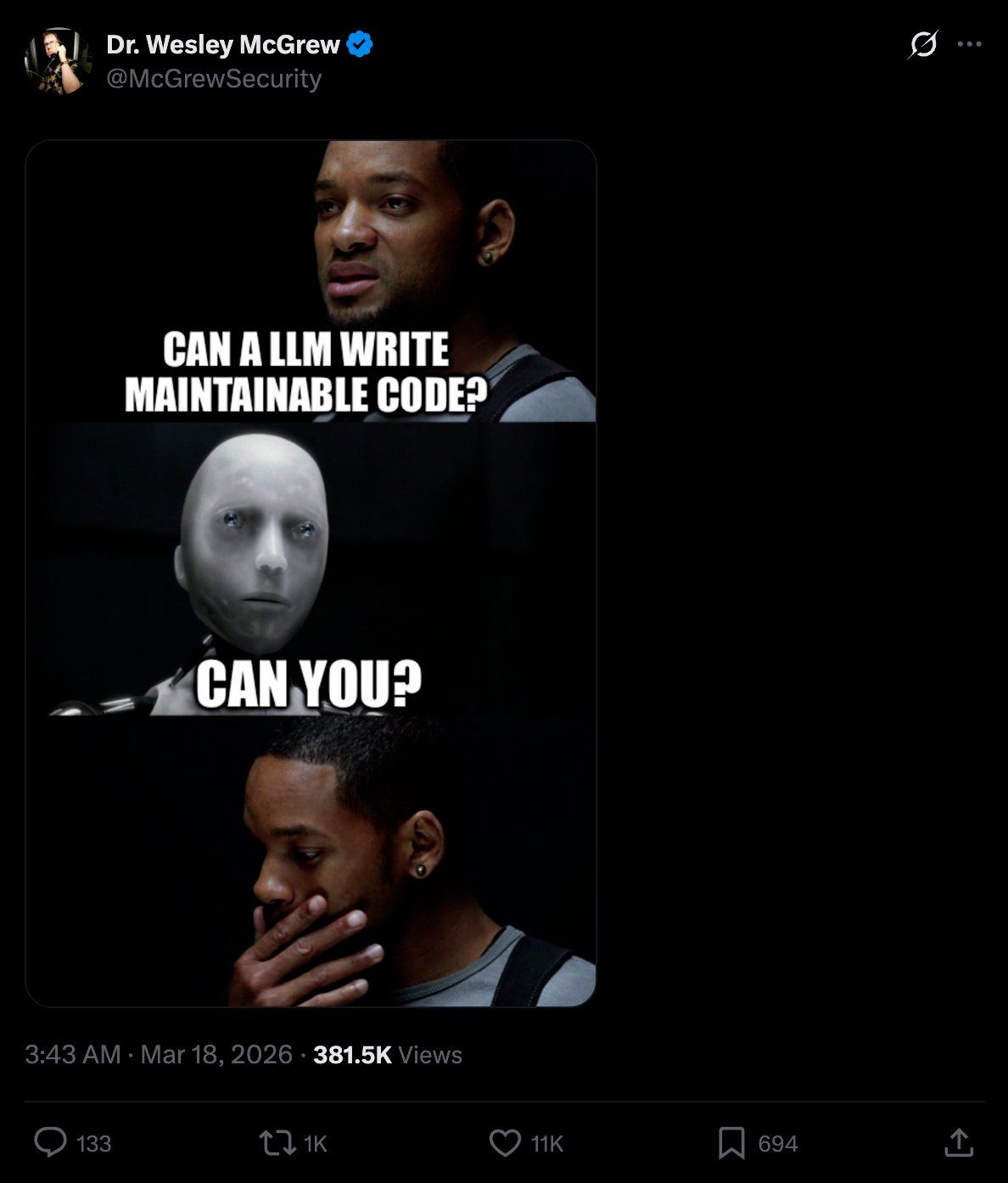

Meme of the day.

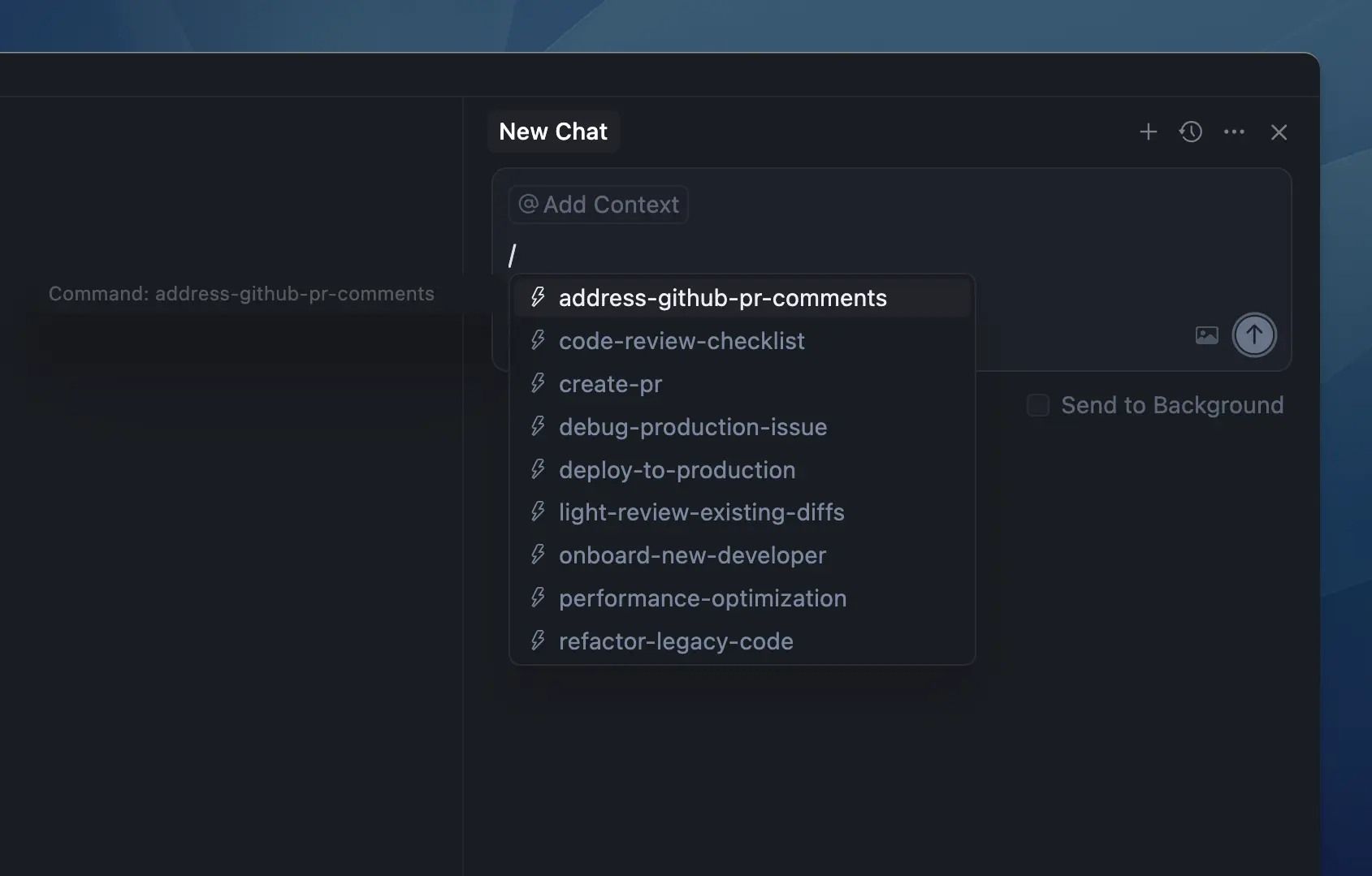

Skill Tree: Anthropic has hundreds of Skills running inside Claude Code. One of their engineers just shared the 9 types of Skills worth building and the patterns behind them (5.6M views).

Prompt Kit: Notion's co-founder just shared the exact prompt snippets he uses for AI coding, from forcing simpler designs to getting agents to actually debug root causes.

Hook Ban: Factory AI banned React's useEffect entirely after one too many production bugs. Here are the 5 replacement patterns they use instead.

Parameter Golf: OpenAI just dropped a new challenge to train the best language model that fits in 16MB and runs in under 10 minutes. Prize pool: $1M in compute.

Data Labor: Every time you clicked "I'm not a robot," you were labeling training data for Google Maps and Waymo — almost 500,000 hours of it daily. This viral thread breaks down the full story (13M views).

Zero Features: Lovable landed Microsoft, Uber, and Zendesk without shipping a single enterprise feature. Their founding engineer shared the one constraint that made it work.

Agent Blueprint: Google just published 5 design patterns for building agent skills, with working ADK code for each (1.3M views).

AI CODING HACK

How to automate PRs in Cursor with one command

Every pull request follows the same repetitive cycle: diff, commit, push, and open. You likely repeat this process a dozen times a day, using the same sequence of git commands.

Cursor's Head of Education shared a hack that lets you collapse this entire workflow into a single /pr command. To get started, create the `.cursor/commands/pr.md` file with the following prompt:

Create a pull request for the current changes.

1. Look at the staged and unstaged changes with `git diff`

2. Write a clear commit message based on what changed

3. Commit and push to the current branch

4. Use `gh pr create` to open a pull request with title/description

5. Return the PR URL when done

Just type /pr into the Cursor agent input to kick off the workflow, and the agent will handle everything for you.
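For reference, here is a rough sketch of the manual sequence that the /pr command stands in for. The helper names `pr_commands` and `run_pr_workflow` are ours, the remote name `origin` is an assumption, and the commit message, title, and body are placeholders that the agent would normally write from the diff. It defaults to a dry run that only prints the commands.

```python
import shlex
import subprocess
from typing import List


def pr_commands(message: str, title: str, body: str) -> List[List[str]]:
    """Build the git/gh commands for one diff-commit-push-open cycle."""
    return [
        # Show staged and unstaged changes relative to the last commit.
        ["git", "diff", "HEAD"],
        # Stage everything, then commit with the given message.
        ["git", "add", "--all"],
        ["git", "commit", "-m", message],
        # Push the current branch; assumes the remote is named "origin".
        ["git", "push", "--set-upstream", "origin", "HEAD"],
        # Open the pull request; gh prints the PR URL on success.
        ["gh", "pr", "create", "--title", title, "--body", body],
    ]


def run_pr_workflow(message: str, title: str, body: str, dry_run: bool = True) -> None:
    """Print the commands (dry run) or execute them, stopping at the first failure."""
    for command in pr_commands(message, title, body):
        if dry_run:
            print(shlex.join(command))
        else:
            subprocess.run(command, check=True)


# Placeholder values; dry_run=True prints the commands without touching the repo.
run_pr_workflow("Fix typo in README", "Fix typo in README", "Corrects a spelling error.")
```

The custom command does not remove any of these steps. It hands them to the agent, which also takes on the part a script cannot do well: reading the diff and writing a sensible commit message and PR description.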

TOP & TRENDING RESOURCES

Top Tutorial

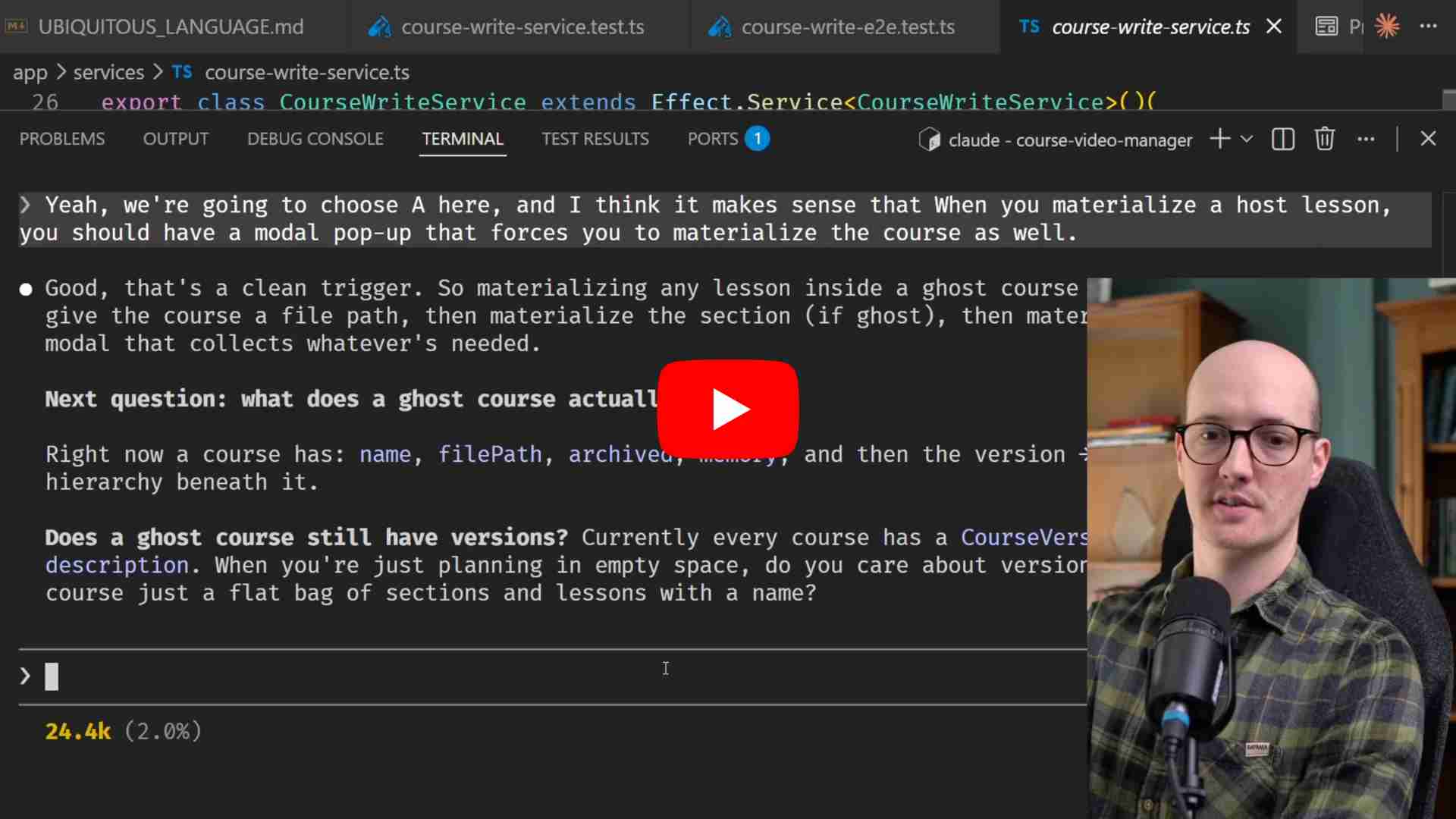

How to build a real feature with Claude Code: This tutorial covers how to build a practical workflow for creating real-world features with Claude Code. It demonstrates how to brainstorm ideas, draft requirements, and open GitHub issues, so automated AI agents can handle the heavy lifting while you stay focused on testing and quality control.

Top Repo

Awesome Codex Subagents: Following OpenAI's recent launch of subagents in Codex, one dev created this repo featuring a collection of more than 130 specialized subagents designed for specific development tasks.

Trending Paper

What 81k people want from AI (by Anthropic): Public discussions about AI often feel too abstract, so researchers surveyed more than 80,000 Claude users about their actual needs. The AI lab found that while people are hopeful AI will improve their daily lives, these aspirations are closely tied to real concerns about job security and reliability.

You can also reply directly to this email if you have suggestions, feedback, or questions.

Until next time — The Code team