Welcome back. Powerful computing is making its way into our pockets. OpenAI just dropped an update that lets you code from your phone while your laptop does the heavy lifting. And there’s a lot more for developers in this issue.

Also: How Airbnb engineers ship 64% of PRs with agents, an ex-Google engineer explains what's inside an LLM, and Anthropic explains the AI race between the US and China (research).

Today’s Insights

Powerful new updates and hacks for devs

Why agentic coding is being called a trap

How to speed up Claude's first API call

Trending social posts, top repos, and more

TODAY IN PROGRAMMING

OpenAI brings its coding agent to mobile: The ChatGPT maker just shipped a mobile preview of its coding agent, Codex. You can review diffs, approve commands, and guide active sessions right from your phone while the heavy lifting stays on your laptop. OpenAI has also rolled out hooks for scanning prompts for secrets or adjusting settings for specific repos. Plus, new scoped access tokens let teams integrate Codex directly into their CI workflows.

xAI ships its first CLI coding agent: The Musk-led AI lab just dropped an early beta of Grok Build, a coding agent and CLI designed for high-level professional work. SuperGrok Heavy subscribers can install it with a simple curl command. Once installed, you can use plan mode to review or adjust every step before the changes are applied as clean diffs. It even handles massive tasks by delegating them to parallel subagents in their own worktrees.

Prime Intellect's AI agents beat human records at AI research: The SF-based AI lab just ran Codex and Claude Code autonomously on the nanoGPT speedrun for two weeks. Over 10,000 runs on idle compute, both agents beat the human baseline, with Opus setting a new record of 2,930 steps. The catch is that the agents still relied heavily on existing human research and couldn't quite come up with original ideas on their own.

PRESENTED BY BITDRIFT

Mobile app experiences reflect reality. Reality is messy. Bad signal, mid-onboarding drop-offs, force quits on a device you've never tested. Every other observability tool relies on heavy sampling, leaving you with tunnel vision.

bitdrift captures 100% of data, unsampled, in real time across 1B+ installs. And with our Public API and agent skills, your AI agents can query that reality directly.

Don't let them fix the wrong things. Query reality with bitdrift: mobile observability built for the real world.

INSIGHT

Why agentic coding is being called a trap

Source: The Code, Superhuman

The orchestrator pitch is hitting a wall. AI's biggest engineering trend, where humans plan and AI builds, is seeing major pushback from senior devs and model providers alike. The pushback gained momentum this month after a viral essay by veteran developer Lars Faye topped Hacker News. He calls agentic coding a "trap" that eventually collapses under its own weight.

The supervision paradox. Faye's argument is that the workflow needs the exact coding skills that heavy AI use tends to erode. Anthropic itself flagged the same paradox in a study, which showed a 47% drop in debugging ability. Even Django co-creator Simon Willison admits he no longer has a clear mental map of the apps he builds using agents.

A more careful position. The essay doesn't suggest moving away from these tools entirely. Faye uses LLMs himself for generating specs and handling ad-hoc tasks, acknowledging the real productivity gains. His specific concern lies with the "orchestration" model, where AI handles the implementation, and humans lose touch with the actual codebase.

Where the line is drawn. Using AI for specs and drafts works well for most engineers, but outsourcing the actual implementation leads to skill atrophy. The real question is: how do you supervise AI effectively if you aren't doing the coding it’s supposed to replace?

IN THE KNOW

What’s trending on socials and headlines

Year in Three Days: Shopify's former CTO joined an AI-native company where an agent ran his entire first day. Three days in, he feels like he's been there a year and wants to rename "onboarding" (~1M views).

PR Machine: Airbnb is now shipping 64% of production PRs with agents, and two of their engineers just walked through the 15-minute playbook (5.7K bookmarks).

LLM Internals: Ex-Google engineer breaks down what's really happening inside an LLM and why every dev shipping with them should stop treating it like a black box.

Hands Off: Anthropic and OpenAI both shipped a new /goal command that lets agents skip the approve-every-step grind. Here's how to use it.

ML Path: This 12-stage roadmap covers every skill you'd need to break into ML engineering.

MCP Overload: Connecting too many MCPs at once creates a context bloat problem that tanks accuracy. This tutorial shares the fix.

Full Send: This developer's Codex workflow shows how far autonomous coding can be pushed, with full perms and parallel worktrees stacked on every prompt.

Major Miss: Meta's Head of AI, Alex Wang, opened up on why Llama 4 fell behind, and how the new Superintelligence Labs plans to catch up (1.2K bookmarks).

AI CODING HACK

How to speed up Claude's first API call

When your system prompt is massive, the first API call can really drag. The Anthropic Claude team shared a quick fix: send a throwaway request with “max_tokens=0” before your actual prompt. This caches your system prompt so the next real call hits a warm cache immediately. According to their benchmarks, this makes the first response about 52% faster for a 160k-token system prompt.

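    import anthropic

    client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment

    # Throwaway warmup request: its only job is to cache the system prompt.
    client.messages.create(
        model="claude-opus-4-7",
        max_tokens=0,
        system=[{
            "type": "text",
            "text": BIG_SYSTEM_PROMPT,  # your large system prompt string
            # Cache breakpoint goes on the system prompt, not the placeholder message.
            "cache_control": {"type": "ephemeral"},
        }],
        messages=[{"role": "user", "content": "warmup"}],
    )

Apply “cache_control” to the system prompt rather than the placeholder user message; otherwise, you'll cache the wrong data, and real requests will miss. Since the cache only lasts five minutes, set up a timer to re-trigger the warmup if your traffic is slow.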
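The timer can be as simple as a background thread. Here is a minimal sketch, not an official Anthropic pattern: `keep_cache_warm` is a hypothetical helper name, and `warm_fn` stands in for whatever function sends the warmup request shown above. It uses only the Python standard library.

```python
import threading
from typing import Callable


def keep_cache_warm(
    warm_fn: Callable[[], None],
    interval_seconds: float,
    stop_event: threading.Event,
) -> threading.Thread:
    """Call warm_fn right away, then every interval_seconds until stop_event is set.

    Hypothetical helper: warm_fn is assumed to send the warmup request.
    """

    def _loop() -> None:
        while not stop_event.is_set():
            try:
                warm_fn()
            except Exception as exc:
                # A failed warmup should not kill the loop; try again next tick.
                print(f"cache warmup failed: {exc}")
            # wait() returns True as soon as stop_event is set, so shutdown is prompt.
            if stop_event.wait(interval_seconds):
                break

    thread = threading.Thread(target=_loop, daemon=True)
    thread.start()
    return thread
```

To use it, wrap the warmup call in a zero-argument function, create a `threading.Event()`, and pass an interval comfortably under the five-minute cache lifetime (240 seconds is an assumed safety margin, not a documented value). Call `stop_event.set()` when the service shuts down.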

P.S. You can find 50+ AI coding hacks here.

TOP & TRENDING RESOURCES

Top Tutorial

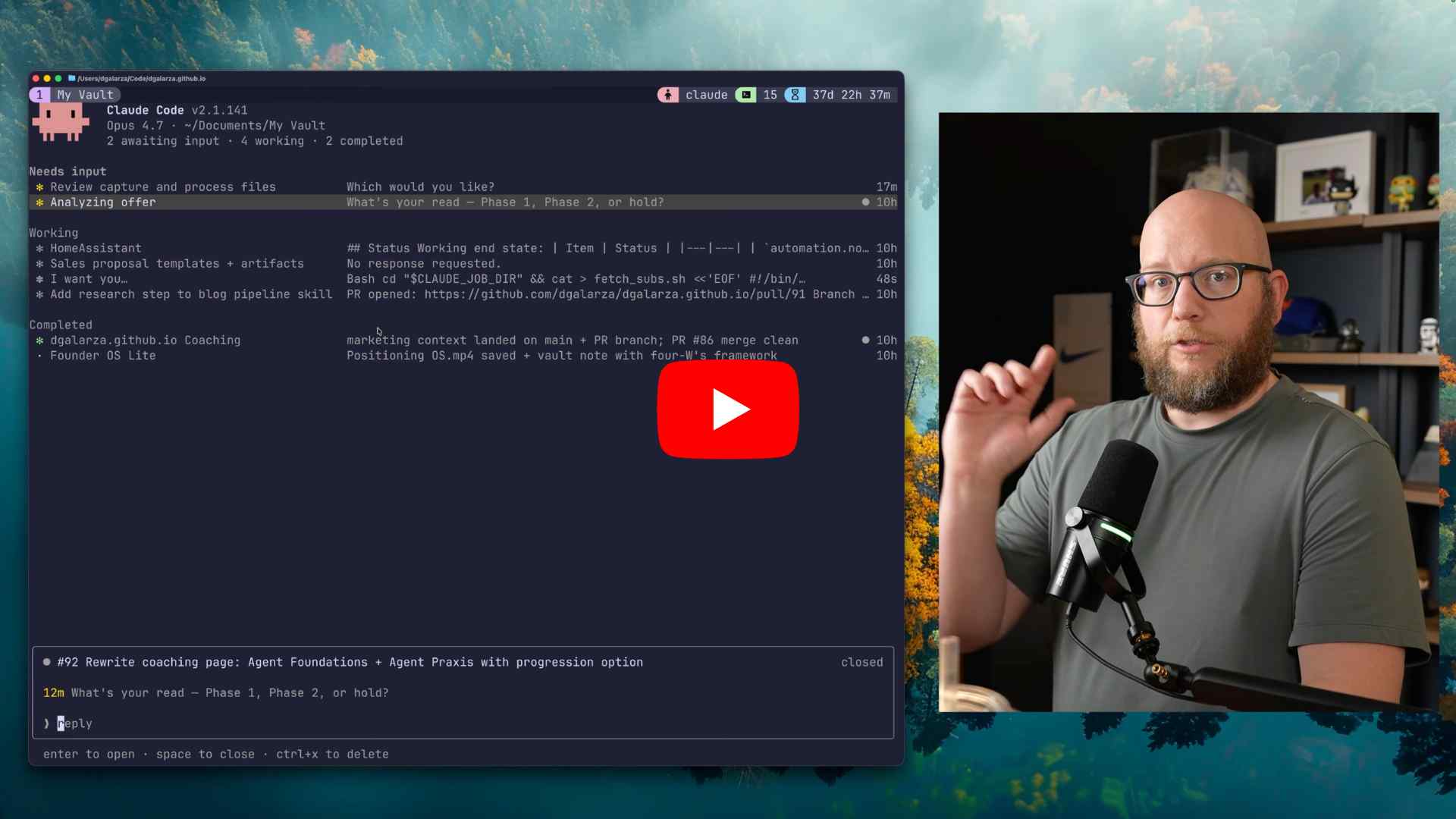

How to use Claude Code’s agent view: This tutorial introduces you to Anthropic’s new Claude Code "agent view" for managing multiple background AI sessions from a single interface. You will learn to monitor session statuses, background existing terminal tasks, reorder or pin projects, and control agents efficiently using essential keyboard shortcuts.

Top Tool

Claudy: Claude Code is powerful, but managing multiple projects in terminal tabs is a mess. Claudy simplifies this with a native macOS app by offering multi-session management, auto account switching, and quick access to Draft Commits, MCPs, and Skills.

Top Repo

OpenHuman (8.4K ⭐): A personal AI assistant that integrates deeply with your tools and workflows. It runs privately on your machine, building a compressed memory graph from your emails, docs, code, and chats to give your agent rich, persistent context.

Trending Paper

Two scenarios for global AI leadership (by Anthropic): This paper argues that global security and civil rights depend on whether democracies or authoritarian governments take the lead in AI. It suggests that by tightening hardware controls and stopping model theft, the US can stay one to two years ahead of China by 2028.

Grow customers & revenue: Join companies like Google, IBM, and Datadog. Showcase your product to our 280K+ engineers and 160K+ followers on socials. Get in touch.

What did you think of today's newsletter?

You can also reply directly to this email if you have suggestions, feedback, or questions.

Until next time — The Code team