Welcome back. When GitHub goes down, the world stops shipping. That’s what happened yesterday when a major outage blocked pull requests and stalled development for hours. Now that systems are back online, we have exciting new updates from OpenAI and Devin you may want to try today.

Today: OpenAI’s open-source spec for Codex orchestration, the 15 startup ideas YC wants to fund this summer, and why OpenAI killed its AGI clause the morning Musk's trial started.

Today’s Insights

Powerful new updates and hacks for devs

The end of the specialist engineer

How to review Codex code without missing bugs

Trending social posts, top repos, and more

TODAY IN PROGRAMMING

OpenAI releases a spec to automate Codex work through Linear: The ChatGPT maker released Symphony after its team struggled to manage too many coding sessions on an internal project. They solved this by putting the issue tracker in charge: every open ticket gets its own agent, which runs until a human reviews the code. Some teams saw a 500% spike in merged PRs, and the spec has already gained over 17K GitHub stars.

Cognition brings Devin into the terminal: The AI agent startup just shipped a local coding agent that lives in your shell with full access to your codebase and tools. It’s compatible with any frontier model, from Opus 4.7 and GPT-5.5 to their own SWE-1.6. When a project becomes too much for your laptop to handle, you can hand the session off to a cloud agent that keeps running in its own sandbox.

China rejects Meta's $2B AI agent startup acquisition: China's state planners just blocked Meta's acquisition of Manus, a startup known for its general-purpose AI agents. Meta wanted to integrate Manus's coding, market research, and data analysis automation directly into its apps. However, Chinese regulators have shut it down, citing foreign investment rules that keep the AI startup out of overseas hands.

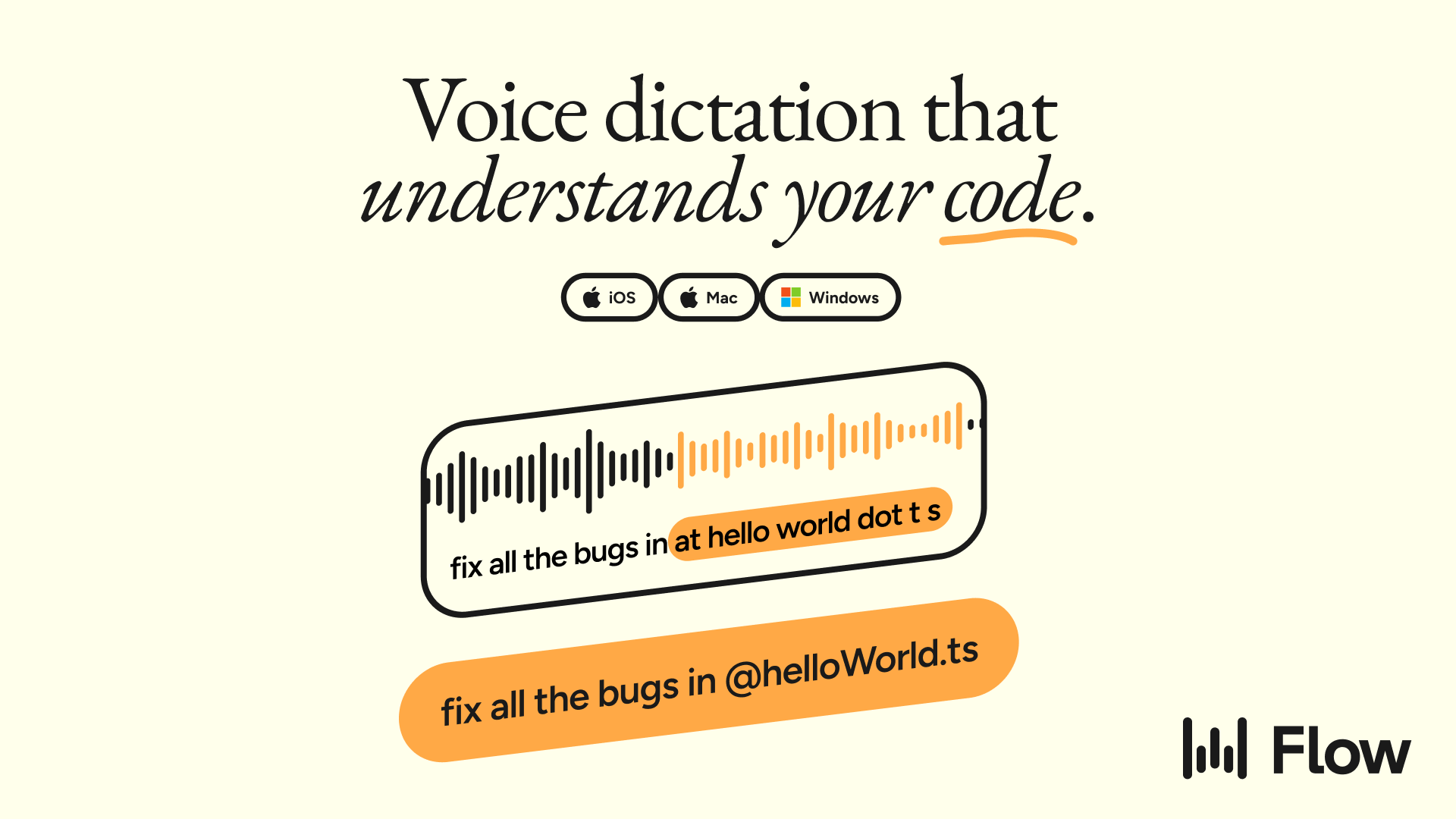

PRESENTED BY WISPR

Your dev stack got an AI upgrade everywhere except the input layer. You're still typing every prompt, every ticket, every review comment by hand.

Wispr Flow closes that gap. Dictate into Cursor, VS Code, Slack, Linear, or anywhere else you work. It's syntax-aware: camelCase, snake_case, acronyms, and file names all come through clean. Mention a file in Cursor or Windsurf, and it auto-tags.

It's the voice layer for an AI-native workflow. Speak your intent. Your tools do the rest.

Available on Mac, Windows, iPhone, and Android. Used by millions of developers, including teams at OpenAI and Mercury.

INSIGHT

This might be the end of the specialist engineer. So what’s next?

Source: The Code, Superhuman

An uncomfortable argument. DeepLearning.AI founder and former Google Brain head Andrew Ng says AI-native teams are operating on a completely different level. The specialized teams that companies spent a decade building are becoming a liability: coding is now faster and cheaper, but traditional workflows haven't kept up.

The math stops mathing. As coding agents allow small teams to punch way above their weight, traditional engineer-to-PM ratios are becoming obsolete. Andrew Ng points out that even a 1:1 ratio falls short because constant handoffs just slow things down. This leaves leaders with one big question: Who do you actually hire to lead a team that moves this fast?

From the trenches. The hiring profile is shifting. Alvin Sng, an engineer at Factory AI, recently shared his hiring shortlist. He favors ex-founders returning to IC (Individual Contributor) roles, generalists who can debug the entire stack, and engineers turned PMs. Even LinkedIn is doubling down on this trend by replacing its APM program with a "Full Stack Builder" track that merges coding, design, and PM skills into a single role.

The engineering bet. Deep expertise used to be the priority when shipping took forever, but now the smart money is on versatility. The future belongs to engineers who think like PMs and PMs who can actually code. While specialists are still needed, they’re no longer the ones calling the shots on what gets built.

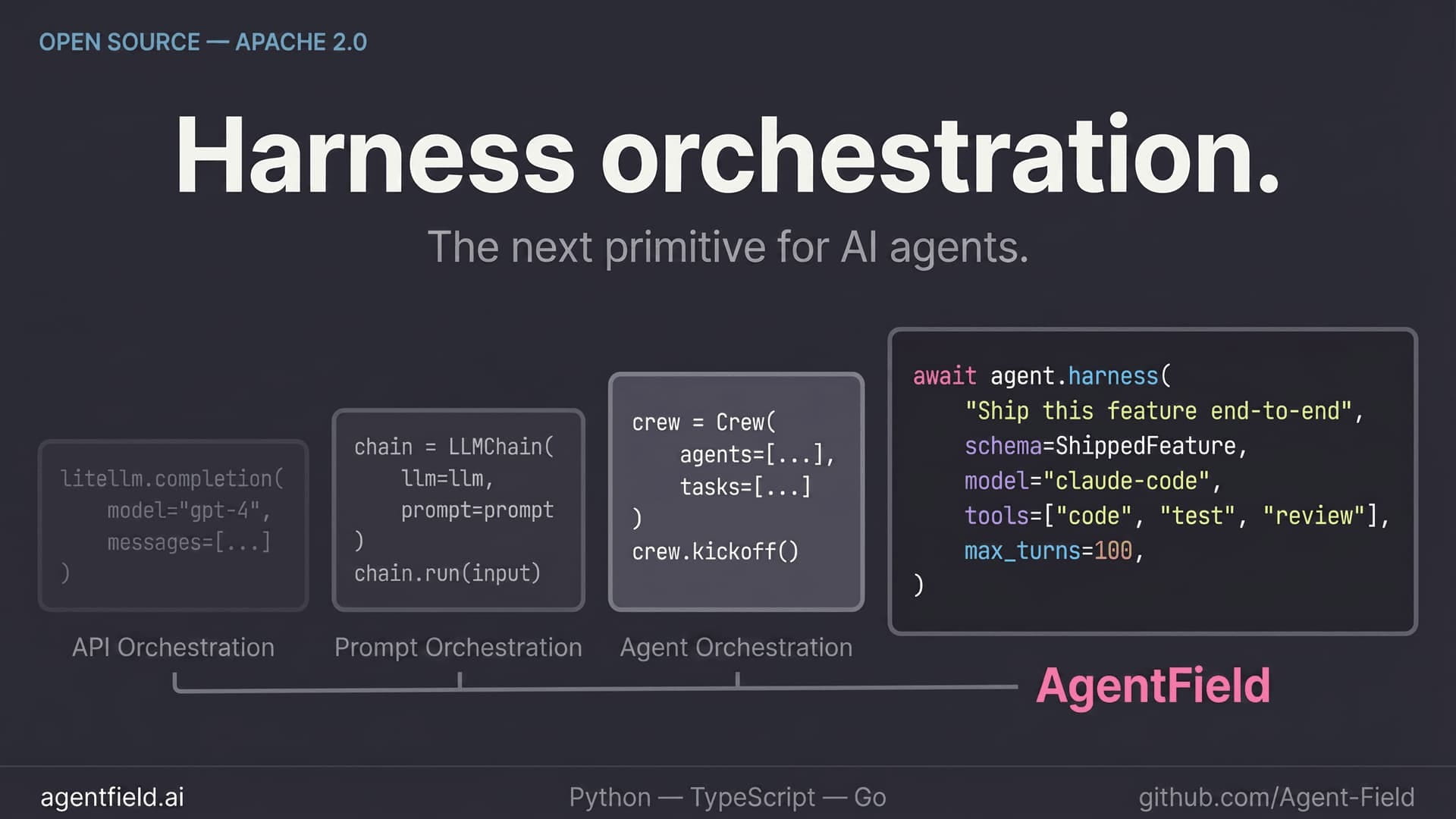

PRESENTED BY AGENTFIELD

Agents-as-APIs wasn’t enough. AgentField’s harness primitive composes your favorite harness systems into powerful, autonomous software.

Fork and use SWE-AF (a 100+ harness software factory) or deploy cloud-security agents out of the box. Our recent blog post takes a deep dive into why harnesses beat agents-as-APIs.

Apache 2.0. Python / TypeScript / Go.

IN THE KNOW

What’s trending on socials and headlines

Meme of the day.

Context Rot: MIT researchers cracked why LLMs get dumber as context grows, even inside the window. Here's their workaround.

541 bookmarks

YC's Wishlist: YC just dropped its Summer 2026 list of 15 startups it wants to fund. Not a single consumer idea made the cut.

2 million views

PR Triage: A Vercel engineer's internal tool turns massive PRs into semantic groups, flagging only the parts that need a human eye.

462 likes

Anthropic Receipts: Someone built a new site that logs every Claude outage, lawsuit, and policy U-turn in one timeline.

1,800 likes

Off-Grid Agent: A Hugging Face engineer built an AI agent that runs entirely inside Chrome, fully local with no servers.

4,900 likes

Lock-in Trap: A computer scientist calls picking one LLM provider a 10/10 regret. His routing setup swaps models on demand.

450 bookmarks

AGI Clause: OpenAI quietly removed the AGI clause and Microsoft exclusivity from its charter on the morning Musk's trial began. This post breaks down why the timing is suspicious.

1.1 million views

AI CODING HACK

How to review Codex code without missing bugs

Reviewing Codex-generated code with the same agent that wrote it creates a feedback loop where it just nods along. Because the reviewer shares the same context as the writer, it confirms decisions rather than questioning them.

OpenAI's documentation suggests using parallel subagents to fix this. To start, run this prompt in any Codex session:

Review this branch with parallel subagents. Spawn one subagent for security risks, one for test gaps, and one for maintainability.

Codex runs three parallel agents, each with its own context window, to keep the main thread clean. You'll get three concise summaries instead of a terminal flooded with logs. Since Codex won't do this automatically, you must explicitly use terms like "spawn" or "delegate in parallel" to avoid serial reviews.

To save on costs, OpenAI suggests using gpt-5.4-mini for these subagents, which you can configure in your prompt or the .codex/config.toml file.
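If you run this review often, you may want to generate the prompt instead of retyping it. Here is a minimal sketch in Python that rebuilds the prompt above from a list of focus areas. The function name `build_review_prompt` and the `focus_areas` parameter are our own illustration, not part of Codex or any OpenAI tooling. The script only produces text for you to paste into a Codex session.

```python
def build_review_prompt(focus_areas):
    """Build a review prompt that explicitly asks for parallel subagents.

    `focus_areas` is a hypothetical list of review concerns, one per subagent.
    """
    if not focus_areas:
        raise ValueError("at least one focus area is required")

    # The first item names the subagent; the rest use the shorter "one for ...".
    parts = [f"one for {area}" for area in focus_areas]
    parts[0] = f"one subagent for {focus_areas[0]}"

    # Join the items into a readable English list.
    if len(parts) == 1:
        listing = parts[0]
    elif len(parts) == 2:
        listing = " and ".join(parts)
    else:
        listing = ", ".join(parts[:-1]) + ", and " + parts[-1]

    # Keep the word "Spawn" so the request for parallel work stays explicit.
    return f"Review this branch with parallel subagents. Spawn {listing}."


if __name__ == "__main__":
    print(build_review_prompt(["security risks", "test gaps", "maintainability"]))
```

Swap in your own focus areas (for example, performance or API compatibility) and the wording that asks for parallel delegation stays intact.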

TOP & TRENDING RESOURCES

Top Tutorial

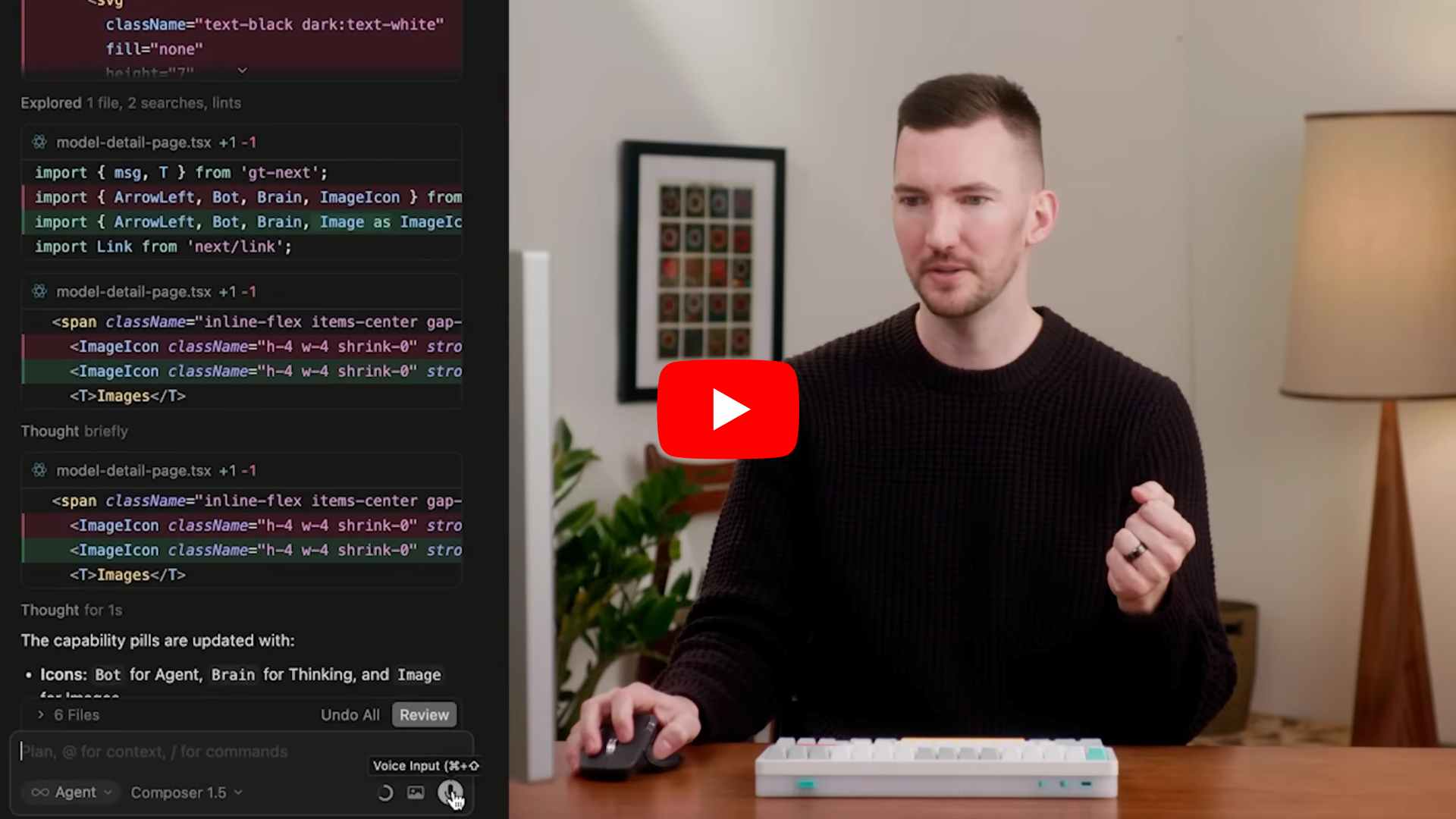

How to build software with agents using Cursor: This tutorial shows developers how to build software with Cursor's AI coding agent. You'll learn how to prompt agents to navigate codebases, plan and build new features, debug errors, review code, and customize your workflow with specific rules and skills.

Top Tool

Entelligence AI: An AI-powered platform designed to catch more bugs and speed up code reviews for software engineering teams.

Top Repo

Agent of Empires: A session manager for Linux and macOS that lets you run multiple AI coding agents simultaneously. Access it via terminal or browser to manage agents across different branches in isolated sessions with optional Docker sandboxing.

Trending Paper

How do AI Agents spend your money (by Google): This research paper looks into the massive, unpredictable token costs of AI coding agents and whether they can estimate expenses upfront. It shows that agent tasks are uniquely expensive, and models consistently fail to accurately predict their own token usage.

Grow customers & revenue: Join companies like Google, IBM, and Datadog. Showcase your product to our 250K+ engineers and 150K+ followers on socials. Get in touch.

What did you think of today's newsletter?

You can also reply directly to this email if you have suggestions, feedback, or questions.

Until next time — The Code team